Facebook Made You Look, Again

The algorithm is active and awful

11/24/2021

The first article I wrote about Facebook, “The Influence of Facebook,” was published three years ago. It focused on data collection and the algorithms the platform deploys to manipulate its users. Sadly, since then, the situation has only gotten worse. The audacity of Facebook executives is alarming. They continue to claim that users control their own experience while they blatantly hold the reins, restricting the accessibility, availability, and distribution of content to each and every one of the platform’s users.

As a member of the National Society of Leadership and Success, WGU Chapter, I was recently offered an opportunity to ask Facebook’s Chief Operating Officer, Sheryl Sandberg, questions during a scheduled speaker broadcast. I was eager to discuss these topics with her but, unfortunately, she canceled. I was going to put my questions in writing and send them by certified mail to see if I would get any kind of response, but then came the announcement of Meta and the expansion of the Facebook empire with their Virtual Reality Labs and Family of Apps. Clearly, she has her hands full at the moment.

The questions I have are:

- What criteria are considered when making operational decisions that will have a global impact?

- What actionable steps and preventative measures does Facebook have in place to reduce the amount of biased content distributed to each of its users on the platform?

The powers of persuasion are hard at work in the technology used to operate a social media site. When it comes to sharing news, Facebook explains on its website that its algorithms evaluate signals to determine if an article may be important or of interest to you. That’s right. They decide right off the bat whether you are likely to read and share an article or not. They pick what you see and what you don’t. You have no choice, and they can only make assumptions with the use of their artificial intelligence.

The algorithm was designed to box up and compartmentalize our minds, theoretically and physically. One of the main signals is location. We are most likely to see only information from our local surroundings as opposed to what is going on nationwide or globally. The executives boast about how the platform connects communities. On the contrary, behind the curtain, the basic structure of their systems actively and deliberately works to disconnect communities from the world at large as much as possible. In fact, it is a priority.

To start, the algorithm is meant to feed us local content only. Back in 2020, it was reported in the UK that Facebook was responsible for an astonishing 94% of the 69 million child sexual abuse images reported by US tech firms (Gillespie, 2020). Unfortunately, this did not cause the company to make many changes. In January 2021, a meme began circulating warning parents that searching for any given letter of the alphabet on Facebook returned nothing but pornographic content in the video results.

This was true. I know because I tried it for myself and saw the results with my own eyes, on my own computer, under my own profile (Spradlin, 2021). As previously stated, the algorithm is designed to feed each user local content first. This is where the problem begins. The map below shows the registered sex offenders within a 10-mile radius of my hometown. Please note that it is completely filled.

I wonder, how many of these offenders use social media? How many of them create and share explicit sexual content? How much of it is regurgitated by the signals and ranking system of the platform’s artificial intelligence? How does end-to-end encryption put children even more at risk with apps like Facebook’s Messenger Kids or the newly announced endeavors? How can local law enforcement agencies protect citizens from this type of cyber danger? I do not know the answers to these questions. When I tried to research this subject further, I was inconveniently met by an error message stating that Michigan’s sex offender registry, at the state government level, is no longer in service.

Therefore, to gain more insight and put this into a bigger perspective, I used myself as a guinea pig to research the algorithm and how it acts on my behalf. Since Facebook is constantly under scrutiny for sexual content, I made that my focus and used memes as my test variable. After just one day of sharing and saving memes of a sexual nature, my news feed was flooded with more, and more, and more. The kicker is that it wasn’t just more sexual memes showing up in my news feed. There were also sponsored ads for sex toys, sexy outfits, dating apps, and so on. Name anything related to sex and I have probably seen it by now. I find this extremely alarming.

Unfortunately, it’s a downward spiral from here on out. As the second step towards “giving users control,” Facebook has established a ranking system for content. This means that after the algorithm decides on your behalf what you might like to see in your news feed, it then scores content on a scale such as 1 to 10. The higher the score, the more likely the content is to be displayed in your feed. The lower the score, the less likely you are to ever come across it on the platform. Every time you react to, comment on, or share anything on Facebook, the score for that particular category of content goes up. Any time you scroll past something without any engagement, the score goes down.
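To make that feedback loop concrete, here is a minimal sketch in Python. It is not Facebook’s actual code, which is not public. The class name `FeedRanker`, the starting score of 5, and the one-point steps up and down are all assumptions made purely for illustration; only the 1 to 10 scale and the up-on-engagement, down-on-scrolling behavior come from the description above.

```python
from collections import defaultdict

# Hypothetical bounds, taken from the "1 to 10" scale described in the article.
MIN_SCORE, MAX_SCORE = 1, 10


class FeedRanker:
    """Toy model of a per-category content score (an illustration, not Facebook's system)."""

    def __init__(self, start_score=5):
        # Assumption: every category starts in the middle of the scale.
        self.scores = defaultdict(lambda: start_score)

    def record(self, category, engaged):
        """Raise the score on a reaction, comment, or share; lower it on a scroll-past."""
        delta = 1 if engaged else -1
        new_score = self.scores[category] + delta
        # Keep the score inside the assumed 1 to 10 range.
        self.scores[category] = max(MIN_SCORE, min(MAX_SCORE, new_score))

    def ranked(self):
        """Return categories ordered from most to least likely to be shown."""
        return sorted(self.scores, key=lambda c: self.scores[c], reverse=True)


ranker = FeedRanker()
for _ in range(3):
    ranker.record("memes", engaged=True)        # shared or saved three memes
for _ in range(2):
    ranker.record("world news", engaged=False)  # scrolled past two news posts

print(ranker.ranked())
```

Even in this toy version, a single day of engagement with one category is enough to push it to the top of the list while everything you scrolled past sinks.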

Lastly, they claim that this process empowers users because they have the option to “hide” content that they are fed and do not like. However, in layman’s terms, it is poor manners to force-feed someone who is not hungry until they vomit. There is no option of seeking truth and knowledge when a machine is constantly feeding us biased information. As users, we have no input as to what the algorithm outputs. We only have the option, after it’s been put in front of us, to say no thank you.

Still, hiding what you don’t want to see also plays into the algorithm’s ability to manipulate the minds of the masses. Even if a user clicks to remove or hide the content, that action is still considered engagement. As a result, it still increases your time spent on the platform, which is the ultimate goal of the algorithm in the first place. So, if you hide or remove too many things, soon enough the algorithm will catch up and keep feeding you that type of content, purposely, to maintain your level of interaction and time spent on the platform.

This automatic, artificially intelligent activity is not limited to sexual content or memes. I used them as an example for the purpose of my own research, but the manipulation extends to everything: entertainment, news, research, information, shopping ads, promotional offers, personal status updates, and posted photos. This influences how we think and how we feel, which consequently impacts all that makes us human. I am talking about the qualities that combine to make each of us unique: personality, character traits, moral values, communication style, and cultural behaviors. Like our fingerprints, they cannot be duplicated.

Moreover, the powers of persuasion are even more pervasive when pushed heavily onto a large population. There is strength in numbers, and the audience of Facebook stands at nearly 3 billion monthly users worldwide (Statista.com). Zuckerberg and company are more like master puppeteers because, behind the scenes, they are pulling the strings on the world wide web that they have woven over the minds of mankind. No two people on the planet are exactly alike and yet this algorithm directly hinders our ability to think for ourselves.

They operate their business model as if we are all lonely sheep needing to be guided into our correct herds. While the service might be free, I fear how much it is actually costing us in terms of civility and humanity. Coincidentally, the sci-fi film Divergent depicts a world where personal traits and characteristics determine which societal faction a person belongs to. Is it possible that this is not just science fiction after all? What if, instead, it is a creative and artistic prophecy of what is to come for us in reality, if we do not take heed and save what is left of our civilization as we know it?

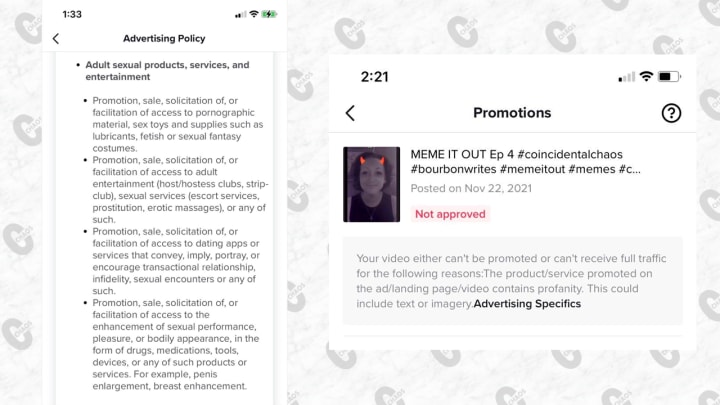

To showcase and document my experience researching the algorithm using sexual memes, I created a comedy clip show out of them on my TikTok account called “Meme It Out.” Honestly, it was so bad and weighed on me so heavily that I just had to find a way to laugh about it and lighten the load. Seriously, I hope it brings more awareness to what is actually taking place right under our noses. Ironically, when I tried to promote the show and purchase an ad for it, I was told I was in violation of their policies because of my sexual content and profanity.

Yet, everything I used was suggested to me, by their artificial intelligence...

Make it make sense. Please check it out here!

References

“Facebook Papers reveal company knew it profited from sex trafficking but took limited action to stop it.” Oct 27, 2021. USAToday.com. https://www.usatoday.com/story/news/investigations/2021/10/27/facebook-profited-sex-trafficking-did-it-break-the-law/6124317001/?gnt-cfr=1

Gillespie, Tom. Oct 12, 2020. “Facebook responsible for 94% of 69 million child sex abuse images reported by US tech firms.” https://news.sky.com/story/facebook-responsible-for-94-of-69-million-child-sex-abuse-images-reported-by-us-tech-firms-12101357

“Meme It Out.” Coincidental Chaos, 2021. https://www.tiktok.com/@coincidentalchaos?

“Number of daily active Facebook users worldwide as of 3rd quarter 2021.” Statista.com. https://www.statista.com/statistics/346167/facebook-global-dau/

Spradlin, Amanda. 2018. “The Influence of Facebook.” https://vocal.media/01/the-influence-of-facebook

Spradlin, Amanda. 2021. “Facebook Under Fire.” https://vocal.media/theSwamp/facebook-under-fire

About the Creator

Amanda Spradlin

Amanda Spradlin is the founder of Coincidental Chaos. She writes with the passion of a questionable mind. Any donations are appreciated!
