Thinking About Nash’s Equilibrium

Can It Help With My AI Build?

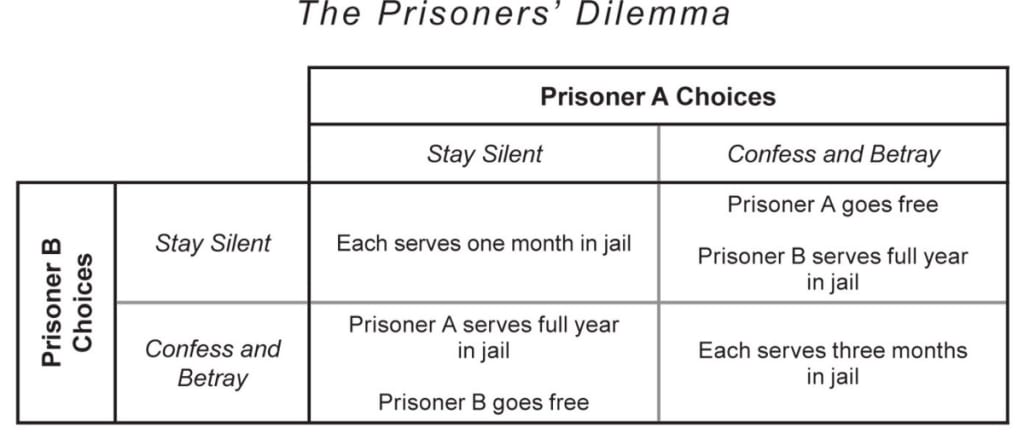

Maybe somebody out there can help me understand something I never understood about the prisoner’s dilemma. Why exactly is confess and betray considered a bad (the worst) decision for the collective? Neither member is stuck with a year in jail. Sure, it’s not the best outcome of just 1 month, but 3 months is a hell of a lot better than a year.

Is it simply because, in terms of the options for “the collective” (in this case both prisoners), there are really only two options, 1 month or 3 months? The other options only apply to each prisoner individually. So even though each individual outcome may be worse, “the collective” is not impacted, because it is essentially invisible, or not a logically existing entity, for purposes of evaluating each choice’s impact?
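To make the comparison concrete, here is a minimal sketch that lists every outcome with its combined jail time and checks which ones are Nash equilibria. The 1 month, 3 months, and 1 year figures are the ones mentioned above; the 0 months for a prisoner who confesses while the other stays silent is my assumption (the usual “goes free” setup), as are all the names in the code.

```python
from itertools import product

# Jail time in months for (prisoner A, prisoner B). Lower is better.
# 1, 3 and 12 months come from the text; the 0 months for a lone
# confessor is an ASSUMPTION (the usual "goes free" version of the game).
SILENT, CONFESS = "silent", "confess"
MOVES = (SILENT, CONFESS)
MONTHS = {
    (SILENT, SILENT): (1, 1),
    (SILENT, CONFESS): (12, 0),
    (CONFESS, SILENT): (0, 12),
    (CONFESS, CONFESS): (3, 3),
}


def is_nash(a, b):
    """True if neither prisoner can shorten their own sentence by switching alone."""
    a_months, b_months = MONTHS[(a, b)]
    a_cannot_improve = all(MONTHS[(alt, b)][0] >= a_months for alt in MOVES)
    b_cannot_improve = all(MONTHS[(a, alt)][1] >= b_months for alt in MOVES)
    return a_cannot_improve and b_cannot_improve


for a, b in product(MOVES, MOVES):
    total = sum(MONTHS[(a, b)])
    print(f"A={a:8} B={b:8} total={total:2} months  nash={is_nash(a, b)}")
```

With these assumed numbers, both confessing is the only pair where nobody gains by switching alone, and its combined 6 months is worse than the 2 months of mutual silence but better than the 12 months of either lopsided outcome. So whether it counts as “the worst” for the collective depends on how you add things up.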

If that is the rationale, I have to say it seems weak. It derives all of its power from the definition of collective. That definition is socially driven, not logically or mathematically driven, unless you deem any group of two or more people a collective. In the case of prisoners maybe that is OK, since they share a common identity and, in the case of the dilemma, a common purpose: to minimize their time spent in jail. But what about me and some random six-month-old baby girl in New Zealand? Are we a collective? The only relevant traits we would seem to share are that we are both human (and therefore have many similar physical, mental and physiological attributes) and we both inhabit the planet Earth. Now of course a six-month-old baby is not capable (I don’t believe) of rational decision making, and thus, one could argue, not capable of engaging in a non-cooperative game of the type to which Nash’s equilibrium applies. Certainly the six-month-old would not be capable of knowing the equilibrium strategies of the other player(s).

It seems as if Nash’s equilibrium, or more precisely various forms of Nash’s equilibria, only apply to rational, intelligent (things?) capable of making decisions individually or collectively and participating in non-cooperative games. Therefore, if any (thing?) is proven to be in a game in which Nash’s equilibrium applies, it must be rational and intelligent. We are able to calculate the reality of Nash’s equilibrium in any game using experimental economic methods. Therefore we should be able to calculate whether a posited AI in a game with an intelligent human is truly intelligent by proving the reality, or not, of Nash’s equilibrium in that game.

It may not help us build an AI, but it could be very useful in allowing us to determine if what we have built is the real deal or not. It may even allow us to test variations of our AI build to “optimize” for intelligence through an iterative process: game vs. intelligent persons, evaluate proof of Nash’s equilibrium, refine the build based on the results, game again, and repeat until the proof is satisfied. Obviously many other people have thought along similar lines, and so read on…

Various groups have been trying to make use of different aspects of game theory in the development, training, testing, and other areas of AI research for quite some time now. As with most things AI, there has been some halting progress made, but Brainiac 2 has not yet emerged from his silicon home to save the world or take it over or even to accept my orange challenge. That said, it seems a promising area for further study, and I have been thinking some about Nash’s equilibrium and how I might use it if I were attempting to build an AI, which I most definitely am not, definitely.

Leaving aside all the computer stuff for a moment and taking things from a purely human perspective, why is it that a Nash equilibrium, or more precisely various types of Nash equilibria, only apply to persons capable of making decisions? Why would what is essentially a mathematical formulation only apply to (intelligent?), (conscious?) persons of decision-making age and maturity level? Seems awfully specific. To clarify some, it does not have to be just persons; institutions, for example, can also be described by the equilibrium. However, unless I am not understanding some critical piece, people need to be involved in some way, shape or form. In other words, two computers matching up head to head in a game cannot be described by Nash’s equilibrium (or can they?).
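One way to poke at that last question: as far as the math goes, a Nash equilibrium is defined purely in terms of the payoff table and the strategies chosen, so nothing in the definition itself asks for a person. Here is a rough sketch, not a proof of anything, in which two dumb programs each keep switching to whatever move is best for them against the other’s last move. The prison terms are the ones from the dilemma above, with an assumed 0 months for a lone confessor; `best_reply` and `play` are made-up names for illustration.

```python
# Jail time in months for (program A, program B). Lower is better.
# The 0 months for a lone confessor is an ASSUMPTION, not from the text.
SILENT, CONFESS = "silent", "confess"
MOVES = (SILENT, CONFESS)
MONTHS = {
    (SILENT, SILENT): (1, 1),
    (SILENT, CONFESS): (12, 0),
    (CONFESS, SILENT): (0, 12),
    (CONFESS, CONFESS): (3, 3),
}


def best_reply(player, other_move):
    """Move that minimises this player's own months against a fixed opponent move."""
    if player == 0:
        return min(MOVES, key=lambda m: MONTHS[(m, other_move)][0])
    return min(MOVES, key=lambda m: MONTHS[(other_move, m)][1])


def play(a, b, rounds=10):
    """Both programs repeatedly best-reply to each other's previous move."""
    for _ in range(rounds):
        new_a, new_b = best_reply(0, b), best_reply(1, a)
        if (new_a, new_b) == (a, b):
            break  # neither program wants to change: a Nash equilibrium
        a, b = new_a, new_b
    return a, b


print(play(SILENT, SILENT))
```

In this particular game the two programs settle on both confessing no matter where they start, and neither one is intelligent in any sense I would recognize. Other games will not necessarily settle down under this kind of back-and-forth, so take it as a toy.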

Dammit, I am stuck here. I am not good enough at math, do not know enough about the research in this area, or maybe I am just plain not intelligent enough to go on. If only I had an AI to help me. Wait a minute, that just gave me an idea: I should build an AI. Oh right, working on it, stuck, dammit… Lol

About the Creator

Everyday Junglist

Practicing mage of the natural sciences (Ph.D. micro/mol bio), Thought middle manager, Everyday Junglist, Boulderer, Cat lover, No tie shoelace user, Humorist, Argan oil aficionado. Occasional LinkedIn & Facebook user
