Do algorithms amplify or erode our humanity?

Making sense of the Information Age

In 2015, a group of scientists published an unusual study on the accuracy of cancer diagnoses. To assess breast cancer risk, 16 testers were given touch-screen monitors and asked to sort through images of breast tissue. The pathology samples came from women whose breast tissue had been removed by biopsy, sliced thinly and stained with chemicals to highlight the blood vessels and milk ducts in red, purple, and blue. To decide whether cancer was lurking among the cells, all a tester had to do was examine the pattern in the image. After a brief training period they were put to work, with impressive results. Working independently, they correctly classified 85 percent of samples. Then the researchers noticed something interesting. When they pooled the answers from all the testers and combined their votes, the accuracy rate rose to 99 percent. What was truly remarkable about this study was not the testers’ skill but their identity. These plucky lifesavers were neither oncologists, pathologists nor nurses. They were not even medical students. They were pigeons.

Even the scientists behind the study didn’t suggest that doctors should be replaced by pigeons; my understanding is that their jobs are safe for some time yet. Nevertheless, the experiment does illustrate that pattern spotting is not a skill unique to humans. If a pigeon can manage it, why not an algorithm?
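The jump in accuracy when the testers' votes are pooled is what simple probability would lead us to expect. Here is a minimal sketch, assuming each tester is right 85 percent of the time and makes mistakes independently of the others. That independence is an idealization, and the study's actual pooling method is not described here. The function name `majority_vote_accuracy` is purely illustrative.

```python
from math import comb


def majority_vote_accuracy(n_voters: int, p_correct: float) -> float:
    """Chance that a majority vote is right, given n independent voters.

    Assumes every voter is correct with the same probability p_correct
    and that a tied vote is settled by a fair coin flip.
    """
    total = 0.0
    for k in range(n_voters + 1):
        # Binomial probability that exactly k voters are correct.
        prob_k = comb(n_voters, k) * p_correct**k * (1 - p_correct) ** (n_voters - k)
        if 2 * k > n_voters:
            total += prob_k          # clear majority is correct
        elif 2 * k == n_voters:
            total += 0.5 * prob_k    # tie: coin flip
    return total


if __name__ == "__main__":
    for n in (1, 3, 16):
        print(n, majority_vote_accuracy(n, 0.85))
```

Under these assumptions the pooled accuracy for 16 voters comes out well above the individual 85 percent. Real testers tend to make the same mistakes on the same difficult images, so the gain seen in practice is smaller than this idealized figure.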

Finding patterns in data has played a major role throughout the history and practice of medicine. Observation, experimentation, and data analysis have been fundamental to the fight against disease for roughly 2,500 years, ever since Hippocrates founded his school of medicine in ancient Greece. He earned the title ‘the father of modern medicine’ by establishing case reporting and observation as a science. Our scientific knowledge may have taken many wrong turns throughout history, but progress is made every time we recognize patterns, classify symptoms, and predict what the future holds for a particular patient.

The history of medicine is full of examples. In 15th-century China, healers discovered they could inoculate people against smallpox. Using a pattern uncovered after centuries of experimentation, they eventually managed to cut the mortality rate from the illness tenfold. All they had to do was find someone with a mild case of the disease, take their scabs, dry them out, crush them, and blow them into the nose of someone who was healthy.

In the 19th century, as medicine’s methods became increasingly scientific, physicians’ work became increasingly concerned with finding patterns in data. In the 1840s, Ignaz Semmelweis, a Hungarian doctor, noticed something startling in the mortality data from maternity wards. Women who gave birth in wards staffed by doctors were five times more likely to develop sepsis than those attended by midwives. The data also pointed to the reason: doctors were dissecting dead bodies and then attending to pregnant women straight afterwards, without washing their hands first.

What was true in 15th-century China and 19th-century Europe is still true for doctors all over the world today, not only when they study disease across a population but also in the day-to-day work of a primary caregiver. Has this bone been broken or not? Is this headache normal, or could it be a sign of something more serious? Could antibiotics clear up this boil? All of these are questions of pattern recognition, classification, and prediction. This is exactly the kind of problem algorithms excel at solving.

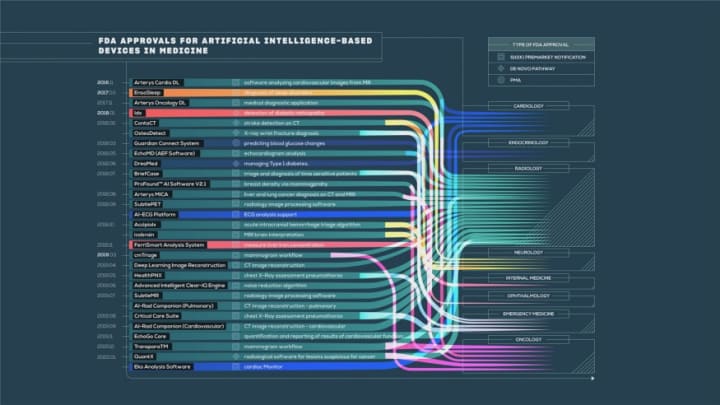

A computer algorithm won’t be able to replicate every aspect of being a doctor. For starters, empathy is an important quality in physicians and nurses, who need to support their patients through social, psychological or financial difficulties. But there are areas of medicine where algorithms have a place, especially those where pattern recognition is found in its purest form and classification and prediction dominate. Pathology is a prime example.

Patients rarely meet pathologists. They are the people in distant labs who examine the blood or tissue sample you send off to be tested and who write the report on it. Sitting at the end of the diagnostic line, their work demands skilled attention, accurate measurements, and reliable results. Often, they are the ones who determine whether or not you have cancer. The biopsy they’re analyzing could be the only thing standing between you and chemotherapy, surgery, or even worse.

Pathologists examine hundreds of slides every day, each containing tens of thousands of cells pressed between small glass plates. Under the microscope, they must scrutinize the samples one at a time for tiny anomalies that could be hiding anywhere in the galaxy of cells in front of them. Pathologists might achieve perfection if they could meticulously examine just five slides a day. In the real world they have no such luxury, and the complexities of biology make their job harder still.

Return to the breast cancer example in which the pigeons were so successful. Whether someone has the disease is not a simple yes-or-no question. Breast cancer diagnoses sit on a broad spectrum. At one end are benign samples, in which normal cells appear exactly as they should. At the other end are the most dangerous tumours, invasive carcinomas, which have spread beyond the milk ducts into the surrounding tissue.

Cases at these extremes are typically fairly easy to spot. According to a recent study, pathologists correctly diagnose 96 percent of straightforward malignant specimens, roughly what the flock of pigeons achieved on a similar task. The diagram below illustrates a few other, more ambiguous categories that fall between these extremes, between totally normal and clearly malignant. Where your sample falls on this line can have a considerable impact on your treatment: you could be offered anything from no intervention to a mastectomy.

Unfortunately, distinguishing between these ambiguous categories is extremely challenging. Even expert pathologists can disagree on the diagnosis of a given specimen. To find out just how much doctors’ opinions vary, 115 pathologists were asked to assess 72 biopsies of breast tissue that were deemed to contain benign abnormalities (a category in the middle of the spectrum). Alarmingly, only 48 percent of the pathologists arrived at the same diagnosis. Once agreement on a diagnosis comes down to 50-50, you might as well toss a coin. With stakes this high, accuracy matters enormously. Could an algorithm do better?

Suppose, for example, that you visit the doctor with a particularly bothersome cough. The chances are that you’ll get better on your own, but a machine looking after you alone might want to order an X-ray and a blood test just to be sure. It would probably also give you antibiotics if you asked. Even if they spared you only a few days of suffering, an algorithm tailored solely to your health and comfort might decide the prescription was worth it. A machine designed to serve the entire population, however, would take antibiotic resistance into account. It would give you drugs only if you were in immediate danger, not when you were temporarily uncomfortable. Such an algorithm might also be mindful of wasted resources and long waiting lists, and so not send you for further tests unless you showed other symptoms of something more serious. When deciding who should receive an organ transplant, a machine working on behalf of the entire population might prioritize saving as many lives as possible. A machine motivated purely by your interests might come up with a different treatment plan.

Medicine may seem less fraught with tension than other fields, because everybody is working toward the same goal: getting the patient better. Yet even here, each party’s goals differ in subtle ways. Whatever aspect of life it touches, a new algorithm will always involve a trade-off between privacy and the public good, between the individual and the population, and between competing challenges and priorities. Even with better healthcare for all as the clear prize at the end, it is difficult to find a route through the tangle of incentives. When those incentives are hidden, it is harder still.

Often, the benefits of algorithms are overstated and their risks obscured. When that happens, ask yourself what you’re being told to believe, and who stands to benefit from your believing it.

At the end of the day, it may fall to us to set some limits on the algorithm’s reach. Certain things do not need to be analyzed and calculated. The same sentiment could well apply to situations beyond everyday life. Not because the algorithms haven’t tried, perhaps, but because, just maybe, some things lie beyond the dispassionate machine’s capacity to comprehend. Sensitivity is an algorithm’s strength; specificity is a human one. Our goal should be to exploit the strengths of each.
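The contrast between sensitivity and specificity drawn above can be made concrete if the two words are read in their diagnostic-testing sense, which is an assumption, since the text does not define them. Sensitivity is the share of genuinely diseased samples that get flagged; specificity is the share of healthy samples that are correctly cleared. The sketch below uses invented counts for two hypothetical readers, not data from any study.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Share of diseased samples that were correctly flagged."""
    return true_positives / (true_positives + false_negatives)


def specificity(true_negatives: int, false_positives: int) -> float:
    """Share of healthy samples that were correctly cleared."""
    return true_negatives / (true_negatives + false_positives)


# Hypothetical counts, invented for illustration only:
# each reader sees 100 diseased and 100 healthy samples.
readers = {
    "eager reader (flags anything suspicious)": {"tp": 98, "fn": 2, "tn": 70, "fp": 30},
    "cautious reader (flags only clear cases)": {"tp": 70, "fn": 30, "tn": 98, "fp": 2},
}

if __name__ == "__main__":
    for name, c in readers.items():
        sens = sensitivity(c["tp"], c["fn"])
        spec = specificity(c["tn"], c["fp"])
        print(f"{name}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Neither reader is better on both measures, which is why pairing the two, rather than choosing between them, is the appealing option.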

Algorithms and human brainpower have complementary capabilities that overlap, intersect, and contrast in numerous ways. We should therefore be careful not to stereotype either one as inherently good or bad, and always keep their real-world impacts in mind as we plan for an efficient, ethical future. After all, algorithms could amplify our humanity rather than erode it, if that is what we intend them to do.

About the Creator

Siddharth

I wear a story on my skin; of how I have been ripped with challenges and sewn back together with determination and perseverance.
