Almost every day, there are news reports about the achievements of neural networks. ChatGPT, Midjourney, and similar tools are hugely popular and rank among the most frequent search queries according to Google Trends. It may seem that neural networks can already do everything and that humans should prepare for unemployment in the near future.

Machines indeed solve many problems, but their "brains" are not perfect yet. Creativity is entirely beyond the reach of robots. Frequent hallucinations in artificial intelligence make it an unreliable replacement for humans, especially in fields where human safety and health are at stake.

Let's explore why and how neural networks make mistakes and how to reduce their hallucinations at the user level.

How neural networks make mistakes and why

Opacity of results

It is often impossible to explain exactly how a neural network arrived at a specific result. Although we understand the general principles of its operation, the "thought process" behind an individual output remains a black box. For example, you show an image of a car to a neural network, and it insists it's a flower. Asking why it arrived at that result is futile.

While such errors might be amusing for an average user, the unpredictability can have unpleasant consequences for business processes.

This lack of transparency can pose a problem for any business that aims to communicate openly with customers, explaining reasons for rejections and other company actions. Social media and various platforms that rely solely on automated moderation are particularly at risk.

For example, in 2021, Facebook's* artificial intelligence (using neural networks as its data processing method) removed user posts featuring historical photos of a Soviet soldier with the Victory Banner over the Reichstag. The neural network deemed it a violation of community rules. The company later apologized and explained that, due to the pandemic, many content moderators were not working, leading to the use of automated checks that resulted in such errors.

Similar issues with AI moderation were reported on YouTube. During the pandemic, neural networks became overly zealous and removed twice as many videos for inappropriate content as usual (eleven million). About 320 thousand of these removals were appealed, and roughly half of the appealed videos were reinstated. Thus, the digital world still relies on human intervention to address these challenges.

Discrimination by neural networks

Sometimes, artificial neural networks exhibit unfair behavior towards individuals of a certain nationality, gender, or race, especially in the fields of business and law enforcement.

A recruiting tool that Amazon began developing in 2014 scored applicants' résumés and consistently assigned lower scores to female candidates. As a result, female applicants were more likely to be rejected, despite the absence of objective reasons for such rejections.

In the United States, facial recognition systems have been known to incorrectly identify a significant percentage of African Americans, leading to unjust arrests.

Need for large volumes of data

Neural networks are data-hungry: training requires vast amounts of information, sometimes including personal data. Collecting and storing such data is neither simple nor cheap. When a neural network lacks sufficient training data, it tends to make errors.

For example, in 2022, drivers of right-hand drive vehicles started receiving fines due to AI. The neural network couldn't distinguish between a person sitting in the seat and an empty seat, leading to fines for not wearing seat belts. Developers attributed this problem to data insufficiency. They explained that the AI needed a substantial amount of right-hand drive vehicle data to avoid errors. However, this doesn't guarantee accuracy, as AI can be confused by glares and reflections on cameras.

Sometimes, these cases can be comical. For instance, Tesla's autopilot system failed to recognize a rare form of transportation – a horse-drawn carriage on the road. On other occasions, neural network glitches can be quite alarming. There have been instances where Tesla's system detected a person in an empty cemetery, causing panic among drivers.

Lack of definitive answers

Neural networks can sometimes struggle with tasks that a five-year-old child can easily handle, like distinguishing between a circle and a square. A child would accurately point out which shape is the square and which is the circle. In contrast, a neural network does not give a definitive answer: it might say the shape is a circle with 95% confidence and a square with 5% confidence.
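To illustrate where such percentages come from, here is a minimal sketch of the softmax function, which many classifiers use to turn raw output scores into probabilities. The labels and the raw scores below are hypothetical values chosen for illustration; they do not come from any real model.

```python
import math


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    highest = max(scores)
    # Subtracting the highest score keeps the exponentials numerically stable.
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["circle", "square"]
raw_scores = [3.0, 0.0]  # hypothetical network outputs for one image

probabilities = softmax(raw_scores)
for label, p in zip(labels, probabilities):
    print(f"{label}: {p:.1%}")
```

With these made-up scores, the output is roughly 95% for the circle and 5% for the square. The network never says "this is a circle"; it only ranks the options, and the application decides what to do with that ranking.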

Risk of hacking and deception of neural networks

Neural networks are susceptible to hacking just like any other system. Hacking a network is almost like a scene from science fiction where the villain forces robots to go haywire. Indeed, knowing the weaknesses of a neural network makes it possible to manipulate its operation. For instance, a car's autopilot might start running red lights and stopping at green ones.

In an experiment, researchers from Israel and Japan deceived a facial recognition neural network using minimal makeup, significantly reducing its accuracy. Earlier attacks had relied on camouflage, glasses, or bright elements in the frame, but such disguises were highly noticeable. The researchers therefore wanted to test whether simple, natural-looking makeup, whose placement was itself designed with the help of a neural network, could confuse the AI. Makeup applied without such a plan, by contrast, did not induce any significant recognition errors.

In another demonstration, researchers from Toronto illustrated how a neural network could be hacked using simple symbols that are imperceptible to the user.

One vulnerability of neural networks has been known since 2013: the adversarial attack, in which a tiny amount of noise is added to an image. This causes the network to misidentify objects in the image, such as labeling a table as a car or a tree as a supermarket trolley. Even after a decade, there is no complete solution to this problem.
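The idea can be sketched on a toy example. The code below is not a real attack on a real network: it assumes a hypothetical four-pixel "image" and a hand-written linear classifier, and it nudges every pixel by the same small amount in the direction that lowers the score, in the style of the fast gradient sign method. All weights, pixel values, and the `epsilon` step size are invented for illustration.

```python
def score(weights, pixels):
    """Linear classifier score: a positive value means 'table'."""
    return sum(w * x for w, x in zip(weights, pixels))


def classify(weights, pixels):
    return "table" if score(weights, pixels) > 0 else "car"


def sign(value):
    return (value > 0) - (value < 0)


def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon against the gradient of the score.

    For a linear model, the gradient of the score with respect to a pixel
    is simply that pixel's weight.
    """
    return [x - epsilon * sign(w) for w, x in zip(weights, pixels)]


weights = [0.5, -0.25, 0.75, -0.5]  # hypothetical trained weights
image = [0.5, 0.5, 0.25, 0.25]      # hypothetical pixel intensities
noisy_image = perturb(weights, image, epsilon=0.125)

print(classify(weights, image))        # label for the original image
print(classify(weights, noisy_image))  # label after the small perturbation
```

Here the original image is classified as a table and the perturbed one as a car, although no pixel changed by more than 0.125. In real images with many thousands of pixels, the per-pixel change needed to flip the label can be far smaller, which is why such noise can go unnoticed by a human.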

The complexity of neural networks

Like any complex system, neural networks are imperfect. They learn quickly and can process vast amounts of data, but they lack a complete analogue to human critical thinking. Although a neural network can challenge information and understand its mistakes, sometimes it simply "believes" in incorrect things and confuses facts, leading to the creation of its own fabrications. This tendency is especially pronounced when the initial information is derived from unreliable sources.

The complexity of neural networks and their aspiration to emulate human intelligence raise concerns in society. In March 2023, the Future of Life Institute published an open letter calling for a six-month pause in the training of AI systems more powerful than GPT-4. More than 1,000 people signed it, including Elon Musk, the head of Tesla and SpaceX, Evan Sharp, a co-founder of Pinterest, and Steve Wozniak, the co-founder of Apple. The number of signatures has now exceeded 3,000.

Superficial assessment of information

When it comes to generating text, a neural network doesn't think metaphorically like a human; it merely uses statistics based on word usage and phrases. The system, in a way, predicts the continuation. It doesn't have the capability to conduct a deep analysis and find causal relationships in the text.
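A heavily simplified sketch of this "predict the continuation" idea is a bigram model, which only counts which word most often follows another. Modern language models are far more sophisticated, so treat this as an illustration of the statistical principle rather than a description of how any particular product works. The tiny corpus and the function names are made up for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent continuation, or None for an unseen word."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


corpus = "the cat sat on the mat the cat ate the fish"  # toy training text
model = train_bigrams(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" most often here
print(predict_next(model, "dog"))  # None: the word never appeared in training
```

The model has no idea what a cat is. It answers "cat" only because that word follows "the" most often in its training text, and it has nothing to say about words it has never seen.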

Lack of uniqueness in results

Although a neural network is designed to generate new content based on a multitude of examples, there are instances where the robot might simply produce a slightly modified image from its training data. In such hallucination scenarios, personal user data could inadvertently be included in images accessible to outsiders.

A probable cause of this error is the repetition of certain images in the training data.

Neural networks do not understand human anatomy well

Often, images generated by various neural networks show extra or distorted fingers, additional hands, legs, or missing body parts, strange shapes for teeth, tongues, eyes, and more.

Neural networks particularly struggle with fingers, which may resemble animal paws more than human hands, and they often make errors in counting them. This is because the network processes images of people from various angles but lacks a deep understanding of how to anatomically position body parts like fingers, eyes, teeth, and so on, resulting in these kinds of images.

Neural networks simply go mad

American researchers have found that generative neural networks, such as those behind ChatGPT and Midjourney, start hallucinating significantly after about five cycles of training on AI-generated data. There is even a term for this phenomenon, Model Autophagy Disorder (MAD): the results deteriorate with each new cycle in which a model is trained on its own output.

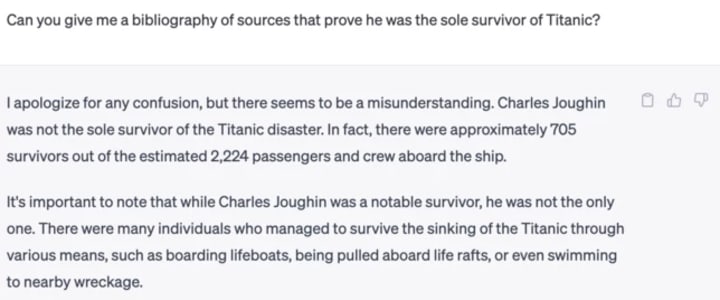

Neural networks can sometimes be unpredictable. For example, in response to the query "Who was the sole survivor of the Titanic?" the neural network confidently provided the name of the supposed "sole" survivor and even shared some details about their life.

It's interesting to note that the neural network, when asked for sources in the subsequent query, promptly apologized for any misinformation and provided the correct information: that there were 705 survivors out of a total of 2224 passengers on the Titanic.

Autonomous behavior was noticed in Snapchat's My AI chatbot, which once posted an unclear photo to its story. Users were alarmed, and some even began to uninstall the app. Notably, My AI was not supposed to be able to post stories at all. Hence, a small machine uprising occurred.

Avoiding neural network hallucinations

Neural network developers are aware of these issues and are exploring solutions. For instance, OpenAI has proposed a new method to combat hallucinations. The idea is to reward the AI for each correct step of reasoning, not just for a correct final answer, which encourages the network to verify facts more carefully. However, the problem is likely to remain relevant for a long time, as research is ongoing.

Despite these challenges, people continue to use neural networks and encounter difficulties. While the average user may not delve into the code, certain actions can improve AI outcomes.

How to use neural networks to minimize hallucinations:

1. Be specific in your queries: Avoid abstract tasks and provide clear, specific instructions. For example, instead of saying, "Write a text about fashion in 2023," say, "Write a text about fashion trends for the spring-fall season of 2023."

Paul McCartney reportedly used a method involving AI to collaborate on a song with John Lennon, who had long passed away. The same algorithm was applied earlier in creating the documentary series "Get Back" about the legendary Beatles. Using neural networks with precise commands, they managed to separate Lennon's voice from the instrumental sounds.

2. Provide more information: Neural networks have vast knowledge from their training data, but understanding your request without context can be challenging. Explain your query in detail. For instance, if you expect a text about the Joker character in a Batman movie, specify that you mean "The Dark Knight" from 2008.

3. Fact-check: Verify the information provided by the neural network and point out errors if any.

For instance, earlier versions of ChatGPT could easily be confused by questions built on a deliberately false premise, such as one that assumes a person can walk on water. The neural network would confidently provide an answer without recognizing that the premise was impossible.

Summary

Despite the high expectations for neural networks, they are far from perfect. Robots often make mistakes, providing incorrect information, generating strange images, misidentifying objects, and more. AI is also sometimes accused of discrimination and generating content that is not entirely original but slightly altered. In other words, they cannot operate without human supervision and corrections.

Hallucinations in neural networks occur because of the complexity of the system and the large volumes of data it must process, or because there is too little data to learn from. The reasons behind decisions are unclear, definitive answers are not always provided, and responses are often probabilistic. Additionally, neural networks are vulnerable to hacking, and they can go haywire when repeatedly retrained on their own output.

To mitigate these issues, it is essential to provide clear and context-rich input to the neural network and always verify the results for accuracy.

* Facebook and other products by Meta are considered to be extremist and are forbidden in the Russian Federation.

The article was originally published here.

About the Creator

Altcraft

Interesting and useful articles about marketing, our product and online communications
