A Candle in the Dark: 9 Rules for Combating Bulls#!t

Our brains are wired for collective delusions, but simple tools can combat these frailties.

VISITING STONEHENGE is a magical experience: you can’t help but be influenced by its iconic status as one of the world’s most recognisable ancient monuments. But the stone circle also radiates a kind of mystical aura of its own.

What brought the ancients to this place as early as 10,000 years ago? Four large Mesolithic posts were erected there around 8000 BC, three of them in an east-west alignment; their postholes were uncovered by archaeologists beneath the modern-day carpark.

The stone circle we see today was probably erected around 2500 BC, although the crescent of bluestones at the centre may have been transported from 250 km away and installed in their present alignment as early as 3000 BC. This makes it as old as (or maybe older than) the first Egyptian pyramid, the step pyramid of Djoser, built around 2620 BC.

In contrast to the Egyptians, about whom we know a great deal, we know almost nothing about Stonehenge’s creators, or even how the monument was used. That is part of its attraction: it is an enduring enigma, a riddle shrouded in the mists of time.

Which is probably why, when I visited the megalith at sunset with a local archaeologist some years ago, we saw a motley collection of people in robes praying to the stones. Two women bowed vigorously before the Sarsen stones, led by a man in flaxen robes.

It’s not surprising. Because of Stonehenge’s missing history, visitors may attach to it any meaning they choose — hence the Celtic revivalists known as Druids or those claiming Jesus Christ visited it in his ‘lost years’, aged 12 to 30.

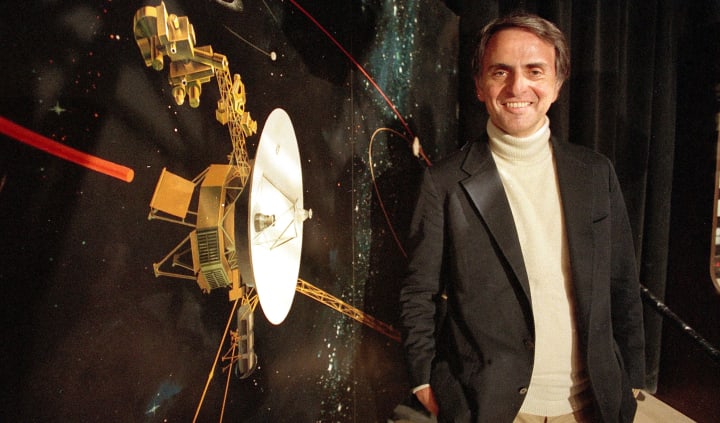

In Carl Sagan’s excellent ode to critical thinking, The Demon-Haunted World, the late astrophysicist provides a sceptical analysis of various superstitions, hoaxes and pseudosciences. He tries to understand why people are so attracted to fantastic explanations for strange phenomena, when evidence is scant or non-existent and so many rational options exist.

Sagan tells of the success of José Alvarez, a 19-year-old American ‘psychic’ who visited Australia in 1988. Alvarez claimed to channel the soul of a 2,000-year-old healer known as ‘Carlos’, and to be able to talk to the dead and heal terminal illnesses. In fact, ‘Carlos’ was completely fictional, created by an Australian TV show to illustrate how gullible people can be. Alvarez fooled thousands, and drew enthusiastic crowds to the Sydney Opera House before the act was revealed as a hoax.

The incident showed, Sagan wrote, “how little it takes to tamper with our beliefs, how readily we are led, how easy it is to fool the public when people are lonely and starved for something to believe in.”

Sagan was also extraordinarily prescient in foreseeing the world we live in today. An extract from that same book has been doing the rounds on social media for the past year or so, especially on Twitter, and it is uncanny in its predictive power:

“I have a foreboding of an America in my children’s or my grandchildren’s time — when the United States is a service and information economy; when nearly all the key manufacturing industries have slipped away to other countries; when awesome technological powers are in the hands of a very few, and no one representing the public interest can even grasp the issues; when the people have lost the ability to set their own agendas or knowledgeably question those in authority; when, clutching our crystals and nervously consulting our horoscopes, our critical faculties in decline, unable to distinguish between what feels good and what’s true, we slide, almost without noticing, back into superstition and darkness.”

Boy, was he right. We have a tendency as a species to fall prey to mass delusions, pseudoscience, hoaxes and the paranormal. Even when a hoax is exposed, as with ‘Carlos’, some people continue to believe the original premise. From alien abductions to crop circles, from psychic surgery to homeopathy, the list is extensive, and new ones continually arise. Recently it’s been conspiracies around COVID-19 that have been spreading like wildfire: that it doesn’t actually exist; that it’s a plot by Big Pharma to sell vaccines; or that COVID vaccines include a digital microchip that will track and control people. As Sagan noted, humans seem to be wired to fall prey easily to simpler, more comforting or more sinister explanations for the mysterious, the unexplained, the unexpected or the bizarre.

SO WHY DO PEOPLE fall for such delusions, when the evidence is so scant and unconvincing? Why do some people insist there’s a ‘face’ on Mars when it has been shown to be a trick of the light, or that crop circles are alien artefacts when the pranksters who created these ingenious hoaxes have shown how it was done?

Why do people persist in believing there was once an ancient advanced civilisation, now lost, known as Atlantis when scores of archaeologists say no shred of evidence exists? Or even that acupuncture, chiropractic and homeopathy can treat major ailments, when a wealth of reliable studies shows otherwise?

We know human perception is unreliable: just listen to the testimony from different witnesses to a fire or a traffic accident and you’ll wonder if they are describing the same event. But why do so many people find themselves attracted to conspiracy theories, or the purported prophecies of the 16th-century French apothecary Michel de Nostredame (better known as Nostradamus)?

Collective delusions do occur, and have done so throughout history: sociologists Robert Bartholomew and Erich Goode have detailed how false or exaggerated beliefs can often arise spontaneously, spread rapidly in a population, and temporarily affect a region, culture or a whole nation. They give numerous examples, from the head-hunter rumour panic of March 1937 on the Indonesian island of Banda, which saw village streets deserted for weeks, to the Seattle windshield pitting epidemic of April 1954, a mass delusion in which previously unnoticed windshield holes, pits and dings were ascribed first to a group of roving vandals and, eventually, to atomic bomb testing.

Such episodes are often dubbed (inaccurately, as it turns out) ‘mass hysteria’, and many factors contribute to the rise and spread of collective delusions. These include rumours, extraordinary public anxiety or excitement, shared cultural beliefs or stereotypes, and amplification of these by mass media or rumour mills, as well as reinforcing actions by authorities such as politicians, the police, or the military.

Charles Mackay, the Scottish journalist and editor of the Illustrated London News, chronicled just how prone people can be to suggestion in his 1841 book, Extraordinary Popular Delusions and the Madness of Crowds.

And it’s clear that what he describes is not just a subject of academic interest or fodder for dinner party repartee: modern instances have destroyed jobs, companies and even economies. The global financial crisis that reverberated through the world’s economies in 2008 began with wild, unfettered debt everyone knew was unsustainable as it relied on borrowers to repay amounts that were clearly beyond their means. Was this not a mass, collective delusion?

In economic booms and busts, which we have cycled into and out of so many times, we can see this very same delusional behaviour at play repeatedly. Mackay reminds us that it is disturbingly familiar: at the peak of the ‘tulip mania’ in February 1637, he wrote, tulip contracts sold for more than 10 times the annual income of a skilled craftsman, and at one point, five hectares of land was offered in exchange for a single Semper Augustus tulip bulb.

From the fruitless, centuries-long study of the transmutation of elements into gold, to the execution of accused witches in Salem; from the 17th-century craze of using magnets to cure ailments, to the 200-year-long military campaigns of the Crusades and their far-reaching political, economic, and social impacts: collective delusions have been a constant throughout history.

But they also play out individually, as in tales of alien abductions, which are remarkably similar to accounts of demonic abductions in earlier centuries. These may all be explained by a condition we are only beginning to understand: sleep paralysis. This is a state, during waking up or falling asleep, where people have awareness but are unable to move or speak. They may hallucinate (hear, feel, or see things that are not there) and episodes usually last a few minutes. Up to 50% of people experience sleep paralysis at some point in their lives, and 5% have multiple episodes.

The ability of the unexplained and the unexpected to confuse and scare us, and our limitations in making rational sense of them, shouldn’t surprise us. “On the inside we are hunter-gatherers,” says physicist Robert Park, author of the book Superstition. “The brain that enables us to write sonnets and solve differential equations has changed little in 160,000 years. Science transported us to a world of jet travel and electronic communication with a brain still hard-wired with the instincts of savages who fought to survive in the Pleistocene wilderness.”

But there is hope, and it can be found in science. In The Demon-Haunted World, Sagan argues that the scientific method and the clarity it brings can help us overcome our fuzzy thinking. Thinking critically and clearly, he says, “is the means … by which deep thoughts can be winnowed from deep nonsense.” He argues “it is far better to grasp the universe as it really is, than to persist in delusion, however satisfying and reassuring.”

Sagan offers a set of tools for critical thinking, which he calls the ‘Baloney Detection Kit’, a nine-step process that helps separate the fallacious or fraudulent from reality:

1. Independent confirmation: Wherever possible, there must be independent confirmation of the stated ‘facts’.

2. Debate centred on evidence: Encourage substantive debate on the evidence by knowledgeable proponents of all points of view.

3. Authority is not evidence: Arguments from authority carry little weight — ‘authorities’ have made mistakes in the past. They will do so again in the future. Perhaps a better way to say it is that in science there are no authorities; at most, there are experts.

4. Consider alternate explanations: Spin more than one hypothesis. If there’s something to be explained, think of all the different ways in which it could be explained. Then think of tests by which you might systematically disprove each of the alternatives. What survives — the hypothesis that resists disproof in this Darwinian selection among ‘multiple working hypotheses’ — has a much better chance of being the right answer than if you had simply run with the first idea that caught your fancy.

5. Don’t be wedded to a single explanation: Try not to get overly attached to a hypothesis just because it’s yours. It’s only a way-station in the pursuit of knowledge. Ask yourself why you like the idea. Compare it fairly with the alternatives. See if you can find reasons for rejecting it. If you don’t, others will.

6. Quantify: If whatever it is you’re explaining has some measure, some numerical quantity attached to it, you’ll be much better able to discriminate among competing hypotheses. What is vague and qualitative is open to many explanations. Of course, there are truths to be sought in the many qualitative issues we are obliged to confront, but finding them is more challenging.

7. Be consistent: If there’s a chain of argument, every link in the chain must work (including the premise) — not just most of them.

8. Occam’s Razor: This convenient rule-of-thumb urges us, when faced with two hypotheses that explain the data equally well, to choose the simpler. It’s much more likely to be correct.

9. Can a premise survive testing? Always ask whether the hypothesis can be, at least in principle, falsified. Propositions that are untestable, unfalsifiable are not worth much. Consider the grand idea that our universe and everything in it is just an elementary particle — an electron, say — in a much bigger cosmos. But if we can never acquire information from outside our universe, is not the idea incapable of disproof? You must be able to check assertions out. Inveterate skeptics must be given the chance to follow your reasoning, to duplicate your experiments and see if they get the same result.

Sagan’s nine simple rules allow us to detect the most common fallacies of logic and rhetoric, such as accepting an argument purely because it comes from someone in authority, or believing someone who proffers statistics of small numbers. There’s no need to be so credulous about the fantastical, when reality is already so fascinating and bizarre, he says: “There are wonders enough out there, without our inventing any.”

In truth, Sagan’s Baloney Detection Kit is really a thumbnail sketch of how science goes about its business, the essence of ‘the scientific method’ — essentially, a codified approach to critical thinking. Or, as Sagan so beautifully put it, science is merely “a candle in the dark”: each year, our discoveries illuminate a little more of the world around us, and we understand things that little bit better.

Ultimately, science is not an answer in itself: it’s merely the tool that helps us find the answers we seek.


About the Creator

Wilson da Silva

Wilson da Silva is a science journalist in Sydney | www.wilsondasilva.com | https://bit.ly/3kIF1SO
