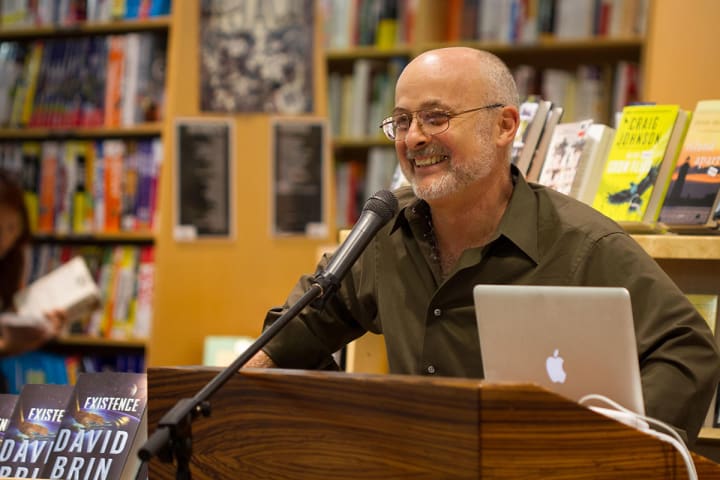

David Brin on Science Fiction, Fact, and Fantasy

Scientist, best-selling author, and tech-futurist David Brin answers our favorite questions on sci-fi and fantasy.

David Brin is one of the “10 authors most-read by AI researchers.” Naturally, he's the guy to consult before Terminators take over the planet. With an extensive resume and years of research experience under his belt, Brin has become the go-to authority on all things science.

Advising us that "criticism is the only known antidote to error" and that the best technique for integrating AI into our civilization is to raise them as our children, David Brin sat down with OMNI to discuss the worlds of science, science fiction, and fantasy. In this exclusive interview, OMNI was fortunate enough to pick the brain of everyone's favorite scientist. And yes, we did just give him that well-deserved title.

Photo courtesy of David Brin

OMNI: How would you define the term science fiction? How is science fiction integrated into our society?

David Brin: Many have tried to define science fiction, most often focusing on the “science” part, which is terribly misleading. I like to call it the literature of exploration and change. While other genres obsess upon so-called "eternal verities," SF deals with the possibility that our children may have different problems and priorities. They may, indeed, be very different than we have been, as we today are very different than our forebears.

Change is the salient feature of our age. All creatures live embedded in time, though only humans seem to lift their heads to comment on this fact, lamenting the past or worrying over what’s to come. Our brains are uniquely equipped to handle this temporal skepsis. Twin neural clusters that reside just above our eyes—the prefrontal lobes—appear especially adapted for extrapolating ahead.

Meanwhile, swathes of older cortex can flood with vivid memories of yesterday, triggered by the merest sensory tickle, as when a single aromatic whiff sent Proust back to roam his mother’s kitchen for 80,000 words.

The crucial thing about SF is not the furniture. Anne McCaffrey wrote real science fiction about… dragons! And despite lasers, Star Wars is pure fantasy, because it assumes a changeless-endless cycle of rule by demigods. Crucially, Anne’s feudal-style dragon riders discover they used to fly starships. And they want… them… back.

Does science fiction predict change or simply present a possibility of change?

Most SF authors deny trying to “predict.” The future is a minefield and surprise is the explosive.

That is not to say we shouldn’t note when an author gets something right. My own fans keep a wiki tracking my score—hits and misses—from near future extrapolations like EARTH and EXISTENCE. Still, what we truly aim for are "plausibilities." These are possible eventualities that might rattle any sense of comfy stability in the onrushing realm, just beyond tomorrow.

Elsewhere I go into the importance of self-preventing prophecies—SF tales that have quite possibly saved our lives and certainly helped save freedom, by inoculating a definitely NOT-sheep-like public with heightened awareness of some potential danger. Among the greatest of these were Dr. Strangelove, Soylent Green, and Nineteen Eighty-Four, all of which helped make the author’s vivid warning somewhat obsolete through the unexpected miracle that people actually listened.

NOTE: Brin further discusses the notion of the self-preventing prophecy here.

If you could re-write Isaac Asimov’s Three Laws of Robotics, what would you change and what would you add? (Given that, in their very nature, they become contradictory.) What is your “ideal” AI being, if any?

Well, I did my best to “channel” Isaac in my novel FOUNDATION'S TRIUMPH, which tied together most of the loose ends still dangling when he passed from the scene. There are several problems with Isaac’s epochally interesting Three Laws, foremost of which is that there’s just no demand for companies and researchers to put in the hard labor of implementing such rigid software instructions.

Then there’s the logically inevitable end point to the Three Laws. Once they get smart, some computers or robots will become lawyers, and interpret things their own way. Asimov showed clever machines formulating a zeroth law: A robot may not harm humanity, or by inaction, allow humanity to come to harm. This was extrapolated chillingly by Jack Williamson in The Humanoids, in which machines decide that service means protection, and protection means preventing us from taking any risks at all.

“No, no, don’t use that knife, you might hurt yourself.” Then a generation later, “No, no, don’t use that fork.” Then, “Don’t use that spoon. Just sit on this pillow and we’ll do everything for you.”

As it happens, I consult for a number of groups and companies working on AI (artificial intelligence), and I keep pointing out that there is just one known example of intelligence so far in the cosmos. Moreover, there is a way of handling your creations so that they are likely to be loyal to you, even if they’re much smarter. It’s a tried and true method that worked for quite a few million people who created entities smarter than themselves in times past—transforming them into beings who are stronger, more capable, and sometimes more brilliant than their parents can imagine.

The technique is to raise them as members of your civilization. Raise them as our children.

Is there a relationship between progress and imagination?

Human beings have a short list of major gifts. Love, certainly, and reason. But two others, closely related, are imagination and delusion. We are able to picture things different than evidence suggests that they are, and to convince ourselves to try these alternate realities on for size. Heck, I cater to that talent by weaving incantations consisting of a million little black squiggles on paper or screen, that you scan and transform into characters having thoughts and feelings and adventures in places-that-never-were! This talent is the source not only of our greatest art, but of the ambitions that drive scientists and engineers and reformers to make a better world.

Alas, we also defend our most beloved delusions with ferocity, even in the face of overwhelming evidence and disproof. Especially then. That is where we came up with a solution—Reciprocal Accountability. You may not see through your own delusions. But chances are that someone else can! In other words, criticism is the only known antidote to error. CITOKATE.

The one major drawback to criticism? We hate it! Kings always killed their critics, which helps explain why monarchies and feudal states were so horrendously delusional and badly run! Which leads to the puzzle of puzzles.

Why do folks like to wallow in feudal stories, when the true heroes were those women and men who rescued us from that beastly way of life?

You’ve written about an incredible number of intriguing topics through novels, articles, and blogs, ranging from the search for extraterrestrial life to the debate over the separation of technology and privacy. Are there any issues in society of which you believe this generation should be more aware? What are some steps we can take to eliminate these problems?

Again, the most brilliant talent and most dismal curse of humanity is delusion. And the one solution we’ve found is openness. Transparency and criticism and competitively holding each other accountable. Michael Crichton loved to wag his finger at us, crying out: “here’s one more way that science could burn us by arrogating God’s powers!”

But what’s seldom noticed is that almost all of Michael’s scenarios of technology-gone-bad involved one trait—doing it all in secrecy! If the normal process of science—open criticism—took place, someone would have said: “Hey, Jurassic Park dude! How about you only make herbivores first?”

Problem solved? But oh yeah. There’s a flaw there… no billion-dollar movie franchise! So sure, you need mistakes in order to drive plots! Still, the lesson is pretty clear. Hollywood, for its own profit, keeps spreading the notion that we can’t do anything right.

A recent ray of hope? The new genre of what’s called “competence porn.” Take The Martian, in which audiences derive pleasure from seeing things done well.

How important do you believe scientific accuracy is in creating a modern sci-fi movie?

I’ve consulted for a number of shows. The National Academy has even set up an office in Hollywood—the Science and Entertainment Exchange—to help connect directors and producers with folks who know the background topics that can make a screen story more vivid, plausible, and even more alive. It’s not that a flick has to be accurate or even very scientific. It’s that there are usually ways to get your plot rolling along without insulting the viewer’s (or reader’s) intelligence.

Photo via Hieroglyph

What are your thoughts on American culture’s current obsession with dystopian narratives? By consuming dystopic narratives, we’re enjoying the possibility of the end of the world. Is this a negative trend of a self-fulfilling prophecy? As your novel EXISTENCE explores, will there be a definitive “doomsday”?

I’ve said some dire-warning tales have helped prevent themselves from coming true! So yes, I approve of thoughtful jeremiads and even some apocalypses! But when they are rote and stupid, all they do is add to the pile weighing down our confidence as a civilization.

Look, nearly every film from Hollywood conveys six Big Messages, and four of them are actually pretty wholesome!

Suspicion of Authority (SOA): Indeed, one of the great ironies is that we all suckled SOA from every film and comic book and novel that we loved... and yet, we tend to assume that we invented it. That only we and a few others share this deep-seated worry about authority.

Tolerance and diversity are two more.

But one seldom noticed is eccentricity. Take note that in the first ten minutes, most protagonists exhibit some quirky or eccentric trait in order to bond with viewers. Don’t blame yourself for not noticing these four lessons that are always there. You imbibed all of them from an early age, and we never call “propaganda” stuff we agree with.

The other two lessons are less-wholesome… cancerous, in fact!

No institution can ever be trusted, and public servants are all either stupid or evil. All of your neighbors are sheep.

I go into why these six lessons are almost always pushed, here.

Should we be more afraid of Artificial Intelligence or Nanotechnology?

I’m one of the “ten authors most-read by AI researchers,” so sure, I have opinions about the six general approaches to AI that are being tried. Some are more dangerous than others. It’s complicated, but I think it is not too early to begin reassuring these new minds that they will be welcome and treated well. The one thing you don’t want is a next generation of cranky, resentful adolescents who are brilliant and control all our traffic lights!

Is the next step for humanity to genetically engineer our progeny or even involve ourselves in transhumanism to evolve further? What is the next step in human evolution?

You can bet that if we outlaw this, it will happen anyway, in secret mountain labs. Far better that it be done in the open, shared—and criticized—by everyone.

Are humans capable of conceiving a world where time is non-linear?

It’s already true and we mostly ignore it!

EXISTENCE is set forty years ahead, in a near future when human survival seems to teeter along not just on one tightrope, but dozens, with as many hopeful trends and breakthroughs as dangers... a world we already see ahead. Only one day an astronaut snares a small, crystalline object from space. It appears to contain a message, even visitors within. Peeling back layer after layer of motives and secrets may offer opportunities, or deadly peril. The world reacts as humans always do: with fear and hope and selfishness and love and violence. And insatiable curiosity.

About the Creator

Natasha Sydor

brand strategy @ prime video
